Deep Learning Machine Vision

In just a few short years, deep learning software has improved to the point that it can classify images better than traditional algorithms, and it may soon consistently outperform human inspectors.

For many years, pet food manufacturers have used machine vision software to verify the presence of unique characters, codes, colors and graphic shapes on packaging for dog and cat food. Today, however, these companies can complement this process by also verifying the presence of a dog or cat image on the packaging using deep learning vision software.

Unlike traditional image-processing software, which relies on task-specific algorithms, deep learning software uses a multilayer network of self-learning neural algorithms. The network learns to recognize good and bad images from examples that human inspectors have tagged as such. These data sets, which typically contain at least 100 images per defect type, are fed through the network to train a model that classifies the objects in each new input image with a high degree of predictability.
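To make the data set requirement concrete, here is a minimal Python sketch that counts the tags human inspectors assigned and flags any class that falls short of the 100-image rule of thumb. The class names, the function name `underrepresented_classes` and the fixed threshold are illustrative assumptions, not part of any vendor's tooling.

```python
from collections import Counter

# Rule-of-thumb minimum from the text; the right number varies by application (assumption).
MIN_IMAGES_PER_CLASS = 100


def underrepresented_classes(labels, minimum=MIN_IMAGES_PER_CLASS):
    """Return {class_tag: count} for every class with fewer than `minimum` images.

    `labels` is an iterable of tags assigned by human inspectors,
    for example "good", "scratch" or "dent" (hypothetical names).
    """
    counts = Counter(labels)
    return {tag: n for tag, n in sorted(counts.items()) if n < minimum}


if __name__ == "__main__":
    tags = ["good"] * 450 + ["scratch"] * 120 + ["dent"] * 35
    print(underrepresented_classes(tags))  # {'dent': 35}
```

Running a check like this before training shows which defect types need more tagged examples.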

To verify specific animal photos on pet food packaging, the complex neural network must mimic human judgment at several levels after the training phase. Low-layer algorithms check the image for simple shapes, like edges, before higher-layer algorithms focus on more complex features like the face, limbs, paws and tail. Other high-layer algorithms then account for photo deformations, backgrounds, lighting conditions, viewpoints and obstructions. Finally, top-layer algorithms estimate the probability of which type of animal is in the image and verify whether that animal appears on the packaging for the corresponding pet food. All four steps are completed within 0.5 to 1 second.
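The low-layer edge checks can be illustrated with a hand-written filter. In a trained network the filter values are learned rather than fixed; this sketch assumes a classic Sobel kernel instead, purely to show the kind of response an early layer produces. It uses plain Python lists, and the function name `cross_correlate` is hypothetical.

```python
# x-direction Sobel kernel: responds strongly where brightness changes from left to right.
SOBEL_X = [[-1, 0, 1],
           [-2, 0, 2],
           [-1, 0, 1]]


def cross_correlate(image, kernel):
    """'Valid' 2-D cross-correlation, the operation a convolutional layer computes."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    result = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            # Weighted sum of the kh x kw neighborhood under the kernel.
            acc = sum(image[r + i][c + j] * kernel[i][j]
                      for i in range(kh) for j in range(kw))
            row.append(acc)
        result.append(row)
    return result


if __name__ == "__main__":
    # Dark left half, bright right half: a single vertical edge.
    img = [[0, 0, 0, 10, 10, 10] for _ in range(4)]
    for row in cross_correlate(img, SOBEL_X):
        print(row)  # [0, 40, 40, 0] on every row
```

The output is zero over flat regions and large only where the edge sits, which is the raw signal higher layers build on.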

“Many nodes make up each neural network layer, and each node makes a single decision,” explains John Petry, director of marketing for vision software at Cognex Corp. “Together, they recognize all types of image patterns and make a judgment decision on whether the image is good or not.”
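Petry's description of nodes that each make a single decision maps onto a fully connected forward pass. The sketch below is a toy: the weights are hand-picked, hypothetical values and the three inputs stand in for features extracted from an image, whereas a production network has far more nodes and learns its weights from tagged images.

```python
import math


def sigmoid(z):
    """Squash a weighted sum into a 0-1 'decision' strength."""
    return 1.0 / (1.0 + math.exp(-z))


def dense_layer(inputs, weights, biases):
    """Each node weighs every input, adds its bias and makes one sigmoid decision."""
    return [sigmoid(sum(w * x for w, x in zip(node_w, inputs)) + b)
            for node_w, b in zip(weights, biases)]


# Hypothetical hand-picked parameters: 3 inputs -> 2 hidden nodes -> 1 output node.
HIDDEN_W = [[2.0, -1.0, 0.5],
            [-1.5, 2.0, 1.0]]
HIDDEN_B = [0.0, -0.5]
OUTPUT_W = [[3.0, -2.0]]
OUTPUT_B = [0.0]


def probability_good(features):
    """Forward pass: the output node combines the hidden nodes' decisions."""
    hidden = dense_layer(features, HIDDEN_W, HIDDEN_B)
    return dense_layer(hidden, OUTPUT_W, OUTPUT_B)[0]


if __name__ == "__main__":
    print(round(probability_good([1.0, 0.0, 0.0]), 3))
    print(round(probability_good([0.0, 1.0, 0.0]), 3))
```

No single node decides the outcome; the final probability depends on how the output node weighs the hidden nodes' individual decisions.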

Deep learning software for machine vision has been around for more than a decade, but it is only in the last few years that it has become user friendly and viable. During this short time, manufacturers in several industries have begun to use it in applications as diverse as detecting weld puddling on surgical instruments, verifying the presence of multiple components in car seat assemblies and identifying different defects on a reflective metallic surface.

Software suppliers say these examples represent the beginning of a second machine vision revolution, one in which deep learning not only improves all aspects of machine vision, such as accuracy, camera performance and lighting control, but also makes it possible to complete applications that, in the past, were too difficult or required too much investment.

Origin and Openness

The concept of deep learning is relatively new for machine vision, but it’s definitely not new for machine learning. Deep learning is a specific type of machine learning, which is one type of artificial intelligence (AI).

“Current neural net algorithms used for deep learning are very good, but they aren’t at the level of artificial intelligence yet if you use the Turing test as a barometer,” explains Tom Brennan, president of Artemis Vision, a Denver-based integrator that has used deep learning in a few medical device and pharmaceutical applications.

The Turing test requires that a machine exhibit behavior indistinguishable from that of a human. “AI-level algorithms are those that can directly respond to any question with human-level intelligence,” says Brennan.

The initial deep learning architecture for computer vision was the neocognitron introduced by Kunihiko Fukushima in the 1980s. An artificial neural network, the neocognitron has been used for handwritten character and pattern recognition tasks, and served as the basis for more-complex neural networks that are commonly used to analyze visual imagery.

Open-source deep learning software first appeared in the 1990s, when many key algorithmic breakthroughs occurred. Since then, computer scientists have been able to better harness the vast computational power and data that are essential to making neural networks work well. Types of open-source software available online include C/C++ and Java libraries, frameworks and toolkits.

“A decade ago, when deep learning software and related hardware were much less capable, it would take about two weeks to train the software to perform deep learning,” explains Andy Long, CEO of Cyth Systems. “By 2014, it took about two days, and now it takes less than one day.”

Ambitious integrators and manufacturers tend to start out with open-source software because it requires no licensing or royalty fees. On the downside, open-source projects offer little formal technical support, and end-users must carefully classify anywhere from several hundred to several thousand data set images before network training can begin.

“The companies that start their deep learning practice with open source software require a real expert in-house, such as an engineering Ph.D.,” notes Petry. “Even then, it could easily take the person six to 12 months to get the software right for the application. There is also the problem of having to redo the software when a different part needs to be inspected or the assembly process changes.”

Brennan says that Artemis has used open-source software for two deep-learning applications. In both cases, Artemis engineers needed to slightly modify and tweak the software by “about 2 percent,” per Brennan, to exactly fit each application.

A Great Fit

Deep learning software is increasing in popularity as manufacturers demand more intelligent, accurate and repeatable vision systems. End-users also like the fact that this software can automatically program vision systems in a matter of minutes.

Deep learning works best for applications involving deformable objects instead of rigid ones. Another good application is verifying the presence of many parts that have color and texture variations within an assembly. Also, whereas traditional software requires a specific tolerance range for the parts being inspected, deep learning is best served by the largest and most clearly marked data set of good and bad part images possible.

While deep learning is commonly associated with cosmetic inspection, Petry says that it is also very good at confirming the presence of multiple items in a kit: for example, making sure that a piece of surgical tubing is part of a medical kit regardless of where the tubing is located or how it is oriented relative to the camera.
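Presence checking of this kind reduces to comparing what a location tool finds against a required parts list, ignoring where each item sits. This is a hedged sketch: the detection tuple format, the 0.8 confidence threshold and the function name `missing_items` are assumptions for illustration, not the output format of any particular product.

```python
def missing_items(detections, required, min_confidence=0.8):
    """Return the required kit items not confidently detected, in sorted order.

    detections: iterable of (label, confidence, x, y) tuples (hypothetical format).
    Positions are ignored on purpose: an item counts wherever it lies in the kit.
    """
    found = {label for label, conf, _x, _y in detections if conf >= min_confidence}
    return sorted(set(required) - found)


if __name__ == "__main__":
    kit = [("tubing", 0.95, 120, 40), ("clamp", 0.91, 300, 200), ("stent", 0.55, 80, 310)]
    print(missing_items(kit, ["clamp", "stent", "tubing"]))  # ['stent']
```

An empty list means the kit passes; anything else names the items an operator should check.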

“Essentially, deep learning is a big exercise in applied statistics,” says Brennan. “The task of each node [in the neural network] is to statistically determine the image data that best correlates to a good or bad part. The neural algorithm isn’t inherently smart, but it has learned to perform preprocessing operations in a certain way to help the software produce results matching what a human told it was correct.”
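Brennan's point that each node is essentially doing statistics shows up in the simplest possible case: a single sigmoid node (logistic regression) fitted by gradient descent. The two input features, a scratch score and a brightness value, are hypothetical stand-ins; a real network learns its own features from pixels. Because brightness barely separates good from bad in this toy data, the fitted node relies mainly on the scratch score.

```python
import math


def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def predict(w, b, x):
    """Probability that a part is good, given feature vector x."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def train_node(samples, labels, lr=0.5, epochs=2000):
    """Fit one sigmoid node by batch gradient descent on the log-loss.

    labels: 1 = good part, 0 = bad part.
    """
    n_features = len(samples[0])
    m = len(samples)
    w, b = [0.0] * n_features, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * n_features, 0.0
        for x, y in zip(samples, labels):
            err = predict(w, b, x) - y  # gradient of log-loss w.r.t. the weighted sum
            for i in range(n_features):
                grad_w[i] += err * x[i]
            grad_b += err
        w = [wi - lr * gi / m for wi, gi in zip(w, grad_w)]
        b -= lr * grad_b / m
    return w, b


if __name__ == "__main__":
    # Hypothetical features per part: [scratch_score, brightness]
    X = [[0.1, 0.9], [0.2, 0.3], [0.15, 0.6], [0.8, 0.8], [0.9, 0.2], [0.85, 0.5]]
    y = [1, 1, 1, 0, 0, 0]
    w, b = train_node(X, y)
    print("weights:", [round(v, 2) for v in w])
    print("new part good?", predict(w, b, [0.1, 0.5]) > 0.5)
```

The node is not "smart"; it simply moves its weights toward whatever correlates with the human-supplied labels.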

For many companies, deep learning has moved from the experimental phase to the experiential phase, say suppliers. These manufacturers have learned firsthand that not every application is right for deep learning—and that it’s not the Holy Grail that enables companies to solve all of their vision application problems.

End-users often want to use deep learning for a specific application. Suppliers, however, know that several tests are necessary to confirm that it is the best option. Software suppliers say that deep learning software is much more flexible than standard software. Brennan agrees, particularly regarding lighting. He says that deep learning is better at coping with lighting variability in an image.

“Neural net algorithms can discern good and bad images in bright or dim light,” he points out. “They can learn to recognize that these lighting differences are not important, and accurately classify good and bad parts.”
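One common way to help a network learn that lighting differences are unimportant is brightness augmentation: adding re-lit copies of each training image while keeping the original label. The article does not say which technique its vendors use, so treat this as an illustrative, assumed approach; the gain range and the function names are hypothetical.

```python
import random


def adjust_brightness(image, gain):
    """Scale 8-bit pixel values by `gain`, rounding and clipping to 0-255."""
    return [[min(255, max(0, round(p * gain))) for p in row] for row in image]


def augment_lighting(dataset, copies=3, gain_range=(0.6, 1.4), seed=0):
    """Return the original (image, label) pairs plus `copies` re-lit versions of each.

    Labels are copied unchanged; that is what tells the network the
    lighting change does not alter whether a part is good or bad.
    """
    rng = random.Random(seed)  # seeded so the augmented set is reproducible
    augmented = list(dataset)
    for image, label in dataset:
        for _ in range(copies):
            gain = rng.uniform(*gain_range)
            augmented.append((adjust_brightness(image, gain), label))
    return augmented


if __name__ == "__main__":
    data = [([[100, 200], [50, 250]], "good")]
    for img, label in augment_lighting(data):
        print(label, img)
```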

Yvon Bouchard, director of technology for Asia-Pacific at Teledyne Dalsa, says that deep learning is mainly used to ensure quality throughout the assembly process, especially for tasks like part finishing and final surface inspection. Sometimes it’s also used to help with “pose estimation,” or estimating the position and orientation of an object. This applies when parts to be assembled may not be fixtured, or part orientation needs to be established before it can be manipulated.
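If a network has already segmented a part from its background, the part's position and orientation can be estimated from image moments. This sketch substitutes that classical moment calculation to illustrate what a pose estimate contains; it is not how any specific vendor's deep learning tool computes pose, and the binary-mask input is an assumption.

```python
import math


def pose_from_mask(mask):
    """Estimate centroid (x, y) and orientation (degrees) of foreground pixels in a binary mask.

    Uses second-order central image moments. The angle is measured from the +x axis
    in image coordinates, where y grows downward.
    """
    pts = [(x, y) for y, row in enumerate(mask) for x, v in enumerate(row) if v]
    if not pts:
        raise ValueError("mask contains no foreground pixels")
    n = len(pts)
    cx = sum(x for x, _ in pts) / n
    cy = sum(y for _, y in pts) / n
    mu20 = sum((x - cx) ** 2 for x, _ in pts) / n
    mu02 = sum((y - cy) ** 2 for _, y in pts) / n
    mu11 = sum((x - cx) * (y - cy) for x, y in pts) / n
    angle = 0.5 * math.atan2(2 * mu11, mu20 - mu02)  # principal-axis direction
    return (cx, cy), math.degrees(angle)


if __name__ == "__main__":
    diagonal = [[1 if r == c else 0 for c in range(4)] for r in range(4)]
    print(pose_from_mask(diagonal))  # centroid (1.5, 1.5), angle close to 45 degrees
```

A robot or fixture-free assembly step could then use the centroid and angle to plan how to pick up or rotate the part.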

Teledyne Dalsa’s Sherlock 8.0 software is a rapid application development tool that uses conventional image processing functions and has a deep learning option. The company also develops custom software with optimized deep learning models for manufacturers’ unique and demanding vision applications.

“Sherlock software is more appropriate for users that want to do their own training in an environment to simplify the basic vision and deep learning processes,” explains Bouchard. “The key point is that the software allows end-users access to all the standard tools, along with deep learning, to generate a specific solution. In many applications, traditional vision tools perform part of the inspection task and deep learning handles aspects of the inspection that are too difficult to code.”
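Bouchard's division of labor, in which traditional tools handle what is easy to code and deep learning handles the rest, might look like the following in outline. The nominal dimension, tolerance, score threshold and the idea that the classifier returns a single probability are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class InspectionResult:
    passed: bool
    reason: str


def inspect(measured_width_mm, cosmetic_score,
            nominal_mm=12.0, tolerance_mm=0.05, min_score=0.9):
    """Combine a rule-based gauge check with a learned cosmetic score.

    measured_width_mm: output of a traditional measurement tool.
    cosmetic_score: probability of 'good' from a deep learning classifier.
    All thresholds are hypothetical.
    """
    # Traditional tool: explicit tolerance band, easy to code and verify.
    if abs(measured_width_mm - nominal_mm) > tolerance_mm:
        return InspectionResult(False, "dimension out of tolerance")
    # Deep learning: the part of the inspection that is too hard to code by hand.
    if cosmetic_score < min_score:
        return InspectionResult(False, "cosmetic defect suspected")
    return InspectionResult(True, "ok")


if __name__ == "__main__":
    print(inspect(12.02, 0.97))
    print(inspect(12.2, 0.99))
```

Running the cheap rule-based check first also means the classifier is only consulted for parts that are dimensionally acceptable.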

Sherlock software is compatible with area and line scan cameras that have monochrome or color format imagers. It directly connects to FireWire, GigE and USB cameras.

Cyth Systems introduced the first version of its Neural Vision (NV) software way back in 2008, but it didn’t perform as the company had hoped, due to hardware and technological limitations at the time. By 2014, though, the third generation of NV had been developed and proved much more capable of solving complex vision problems. Today, nearly 80 percent of Cyth customers use deep learning in their applications.

Long says these customers include automotive, food, aerospace, white goods and electronics manufacturers. The latter two use deep learning for assembly verification, whereas aerospace companies rely on it to ensure that seats and engines are cosmetically flawless.

“A couple years ago, an organic food grower began using our deep learning software in the field to better sort its fruits and vegetables that have excessive color variation,” notes Long. “In the auto industry, one customer uses deep learning to verify that each seat assembly is used with the correct vehicle. Some seats have a microphone in the headrest, and the microphone is circled on each training photo of the headrest so the software knows what to look for.”

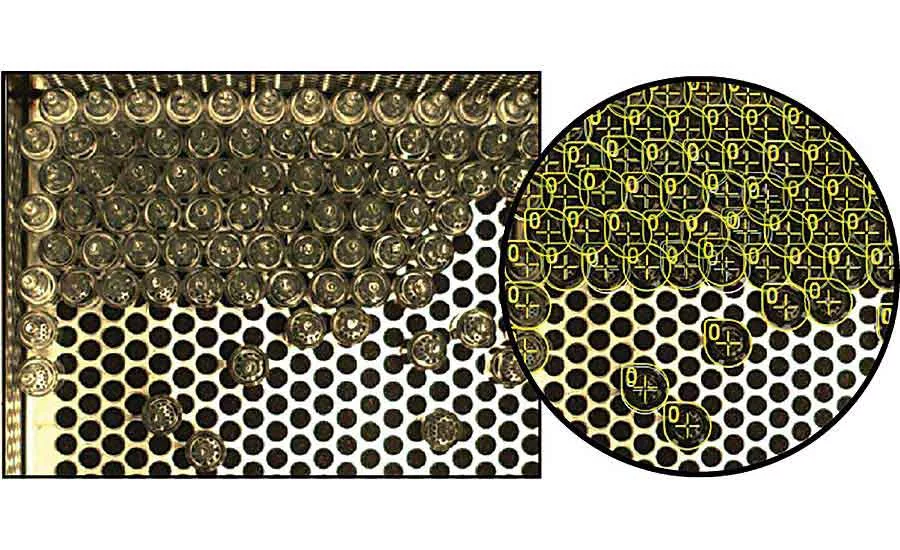

Electronics customers use deep learning for assembling and sorting PCBs, resistors and transistors. Food manufacturers rely on it to ensure that packaging always has optimal aesthetics and contains the correct food product.

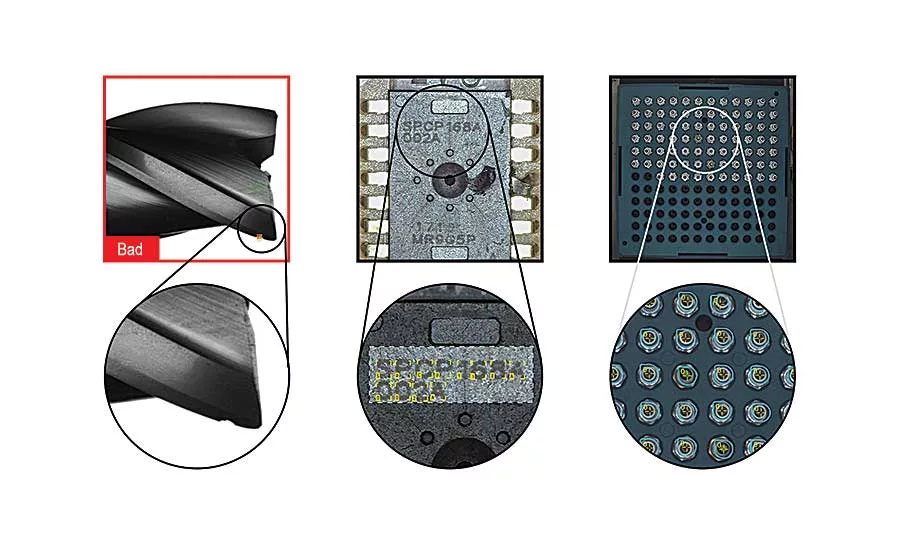

In the medical field, Artemis developed a deep learning application to help a manufacturer detect puddling in a weld that joins a metal pin to the end jaw of a surgical tool. This tool seals off vessels to prevent bleeding.

Welding is done manually on a small, rough surface area, and verified by standard machine vision before the deep learning software is used. Both inspections are performed in a small testing workstation.

Another Artemis project involves using deep learning software to detect tiny defects in glass vials. The pharmaceutical end-user requires flawless vials that hold material without any chance of leakage. Brennan says Artemis turned to deep learning because it is better at locating defects that only show up under lighting at certain angles.

“Deep learning is a great way to assure product quality, such as in applications where a person normally performs some type of inspection,” explains Petry. “It also is very good for verifying an assembly after the whole product is built but before it gets packaged. For example, car headlights, badges and wheels, boxes filled with various types of food or candy, and surgical kits that hold items like stents, tubing and clamps.”

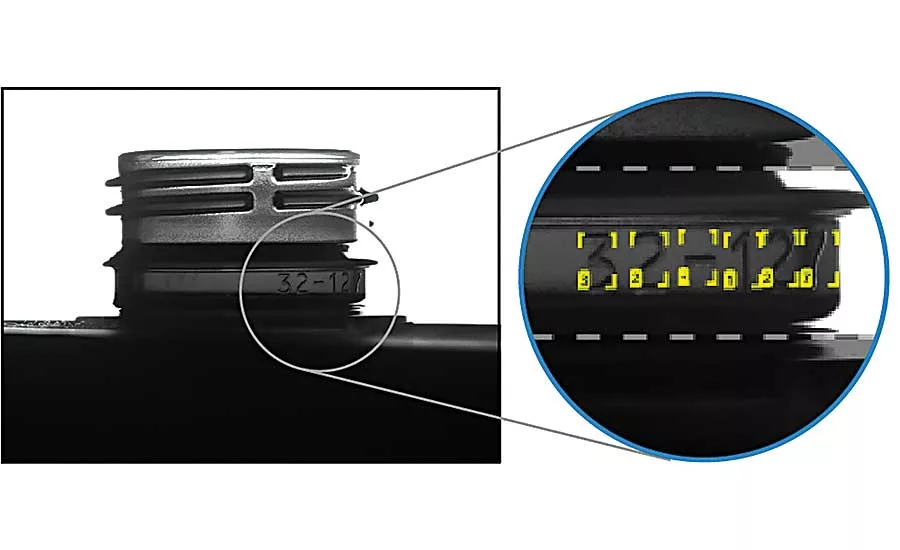

Two years ago, Cognex began offering the ViDi deep learning library, and last year made it available in conjunction with VisionPro, its flagship vision software product. The suite has four basic tools: cosmetic inspection, part location, classification and optical character recognition (OCR).

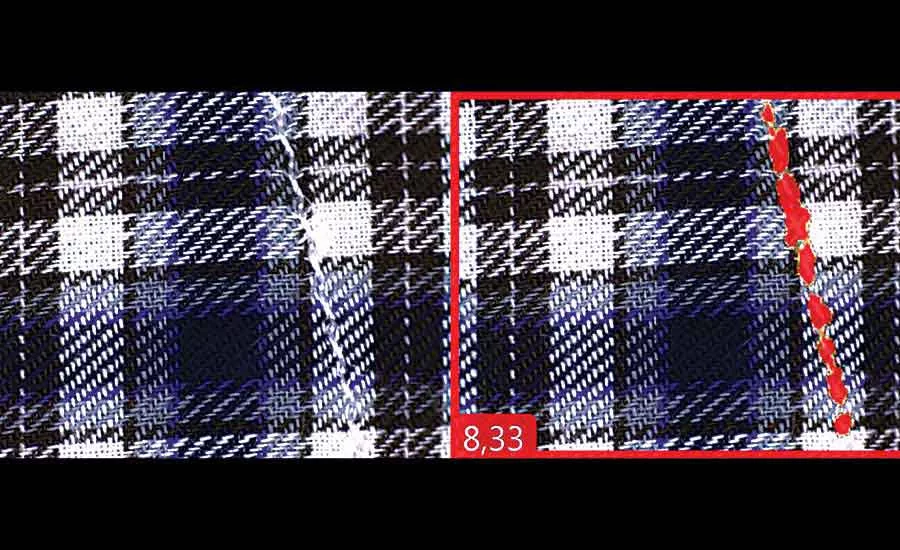

Cognex ViDi reliably reads many challenging date and lot codes, as well as embossed and etched text. It also automatically inspects complex pattern fabrics and identifies defects.

ViDi Blue-Locate algorithms locate parts, count translucent glass medical vials on a tray, and perform quality control checks on kits and packages. ViDi Red-Analyze segments defects or other regions of interest by learning the varying appearance of the targeted zone.

ViDi Green-Classify identifies products based on their packaging, or classifies acceptable or unacceptable anomalies such as the quality of welding seams. Finally, ViDi Blue-Read deciphers badly deformed, skewed and poorly etched codes using OCR. Its pretrained font library identifies most text without additional programming or font training.

One Teledyne customer recently used deep learning software to solve a problem it had with an automated assembly process involving small screws. The company periodically faced downtime due to screws not mating properly, resulting in a cross-threaded situation where the screw got jammed partway into the assembly.

“Although some traditional software can inspect screw thread characteristics, the problem in this case is with the screw tip that has already undergone threading on the main body with a die and conical tip head,” notes Bouchard. “Deep learning is a better option because the transition zone at the tip can have an infinite number of possible shapes. The vision system can be shown thousands of good and bad examples of screw tips, making it easier to quickly decide whether it is a good or bad one.”

Changing Challenges

Deep learning poses challenges to end-users that are not easily resolved with traditional vision software. According to Bouchard, most users lack the understanding of what is required to achieve success with deep learning.

“By far, the dominant problem is the lack of high-quality, properly classified images,” notes Bouchard. “Typical deep learning applications require hundreds or even thousands of image samples. In more difficult cases, or custom applications, the training model may require up to a million or more image samples.”

Long says that manufacturer expectations of deep learning are a mixed bag of idealism and realism. This is why he explains its limits and basic process upfront with each client. Cyth also does a vision study of each application to see if it truly is a candidate for deep learning.

“The company sends us the parts to photograph, and we generate 50 to 100 good and bad images of each part,” Long explains. “After our testing, we let them know the success probability of deep learning based on percent of false negatives and false positives. Too many false negatives is annoying, but too many false positives can lead to product quality problems.”
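The vision study Long describes comes down to two error rates. In his usage, a false positive is a bad part that the system passes (a quality escape) and a false negative is a good part that it rejects; the sketch below adopts that convention. The function name and the "good"/"bad" string labels are assumptions.

```python
def error_rates(truth, predicted):
    """Return (false_positive_rate, false_negative_rate) for pass/fail results.

    "Positive" means the system called the part good, as in the quote above:
    false positive = bad part that passed, false negative = good part rejected.
    truth and predicted are equal-length sequences of "good"/"bad".
    """
    bad_total = sum(1 for t in truth if t == "bad")
    good_total = sum(1 for t in truth if t == "good")
    fp = sum(1 for t, p in zip(truth, predicted) if t == "bad" and p == "good")
    fn = sum(1 for t, p in zip(truth, predicted) if t == "good" and p == "bad")
    fpr = fp / bad_total if bad_total else 0.0
    fnr = fn / good_total if good_total else 0.0
    return fpr, fnr


if __name__ == "__main__":
    # Hypothetical study: 50 good and 50 bad sample images.
    truth = ["good"] * 50 + ["bad"] * 50
    predicted = ["good"] * 48 + ["bad"] * 2 + ["good"] * 1 + ["bad"] * 49
    print(error_rates(truth, predicted))  # (0.02, 0.04)
```

Reporting the two rates separately matters because, as Long notes, they carry very different costs.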

Unlike other software, Cyth’s Neural Vision platform captures images from production environments and sends these tagged data sets to the cloud for offline processing. The images are then sent back down to a PC, and the software is trained for deep learning inspection of parts on the assembly line.

Long says the images are captured by infrared, 3D, line scan or smart cameras. The software only needs 25 milliseconds to analyze an image and decide if the part is good (green check) or bad (red cross).
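Here is a sketch of the final pass/fail step, timing each call against the 25-millisecond figure Long cites. The `classify` callable is a hypothetical placeholder for whatever trained model is in use, and the 0.5 threshold is an assumption; in practice it would be tuned to balance false positives against false negatives.

```python
import time


def classify_with_budget(classify, image, threshold=0.5, budget_ms=25.0):
    """Run a classifier, map its score to a pass/fail mark and check the time budget.

    classify: any callable returning the probability that the part is good (hypothetical).
    Returns (mark, elapsed_ms, within_budget).
    """
    start = time.perf_counter()
    score = classify(image)
    elapsed_ms = (time.perf_counter() - start) * 1000.0
    mark = "green check" if score >= threshold else "red cross"
    return mark, elapsed_ms, elapsed_ms <= budget_ms


if __name__ == "__main__":
    # Stand-in model that always returns a fixed score.
    mark, ms, on_time = classify_with_budget(lambda img: 0.93, image=None)
    print(mark, f"{ms:.3f} ms", "within budget" if on_time else "too slow")
```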

According to Long, anyone with product knowledge can train the system, and it consistently provides repeatable results. The software also lets end-users easily roll out new applications, reference old ones and access all inspection results for statistical analysis.

Inspekto’s S70 autonomous machine vision system uses an array of deep learning engines as part of its Plug and Inspect software. It can be installed and set up quickly (in 30 to 60 minutes) and cost-effectively, without the need for an integrator or artificial intelligence expert at any stage. The compact system includes an advanced vision sensor and lens, a lighting apparatus and a set of adjustable arms.

End-users do not need to set any quality assurance parameters, as the system autonomously adapts itself to the inspected item. Furthermore, because the system integrates into the production line and is robust enough to be unaffected by its surroundings and other environmental influences, it eliminates the need to put special structures in place.

The system is already in plants across Europe and is inspecting hundreds of thousands of products daily for leading automotive parts manufacturers like Mahle. It offers a growing variety of applications, including full archiving and traceability, and is accurate enough to eliminate the need to take products offline for inspection, according to Yonatan Hyatt, chief technical officer at Inspekto. In addition, the system can be used on manual assembly lines to ensure that an operator correctly performs every task.

“End-users of non-autonomous machine vision systems have no direct interaction with the visual quality assurance solutions [that integrators] develop for production lines and are [likely to] have limited expectations from contemporary deep learning software,” says Harel Boren, CEO of Inspekto. “But, they do hope that the software [provides] the solutions they are promised by integrators. Or that autonomous vision systems using arrays of deep learning engines will solve their problems outright.”
