Driven by data

The twin technologies of big data and machine technology will have to work together in order to propel autonomous vehicle development forward, and industry players from automakers to chipmakers are gearing up for a long and winding road.

Artificial intelligence (AI) and machine learning have become vital tools for the production of next-generation automated vehicles, particularly because of the need to recognize and react to the nearly infinite number of scenarios encountered on real-world roads.

The further along the process the industry gets, the more it learns about what it doesn’t understand and what it hasn’t thought through yet, but that hasn’t stopped automakers, component suppliers, trade bodies, and analysts from tackling the enormous challenges of the data-driven autonomous vehicle.

Bryan Reimer, Ph. D., a Research Scientist in the MIT Center for Transportation and Logistics, a researcher in the AgeLab, and the Associate Director of The New England University Transportation Center at MIT, said machine learning and big-data analysis will be used both in the training of autonomous vehicles and be integrated into vehicles themselves so they learn as they drive.

“It is clear that machine learning and big data have an important role in advancing the capabilities of automated vehicles,” he said. “As automated vehicle technologies evolve, one would expect that algorithms that were once embedded in the vehicle in production will be replaced more frequently by algorithms that are learning over time.”

He noted it’s also possible that one would see a mix of algorithms competing as part of the decision process for creating better solutions; these algorithms could eventually receive additional capabilities through over-the-air (OTA) updates, similar to the way your smartphone does today.

“Automakers are going to need to find new and faster ways of testing of algorithms built on learning models,” he said. “These algorithmic approaches offer a good deal of promise, but will need to undergo a degree of rigorous testing that is greater than some systems we see on the market today to ensure that changes do lead to expected improvements.”

An OEM like Tesla, with its in-house approach to self-driving tech, won’t have much of a long-term advantage over automakers that will rely on third parties like Nvidia, Mobileye, or others, Reimer said.

Looking for quick answers on assembly and manufacturing topics? Try Ask ASM, our new smart AI search tool. Ask ASM

“I think the organization has still not come to grips with the complexity of developing robust Level 4 or Level 5 systems that meet consumers demands for mobility,” he said. “A good portion of the rest of the industry seems to have begun to embrace much longer timelines.”

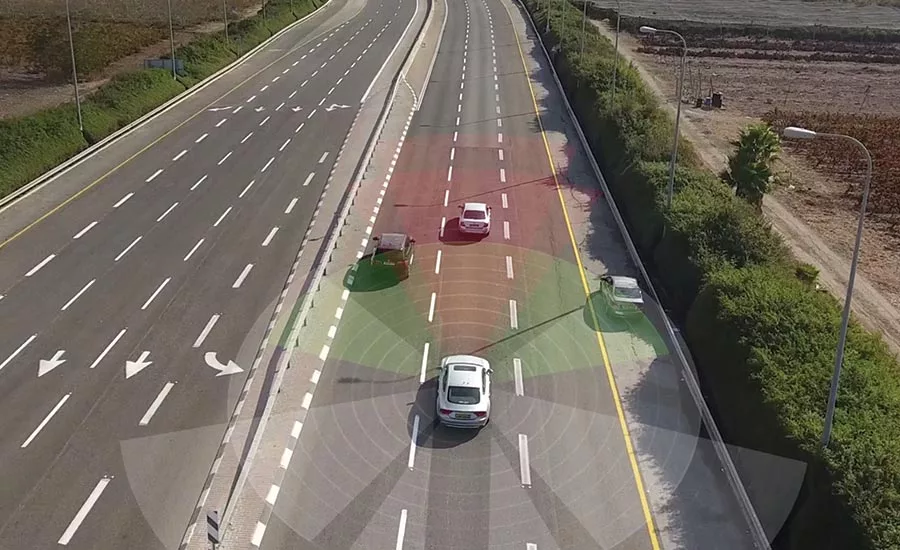

Reimer noted that as telemetry gets stronger, there are great opportunities for machine learning to help improve sensing, path planning, and other key functions needed to support all levels of automated mobility.

Training the brain

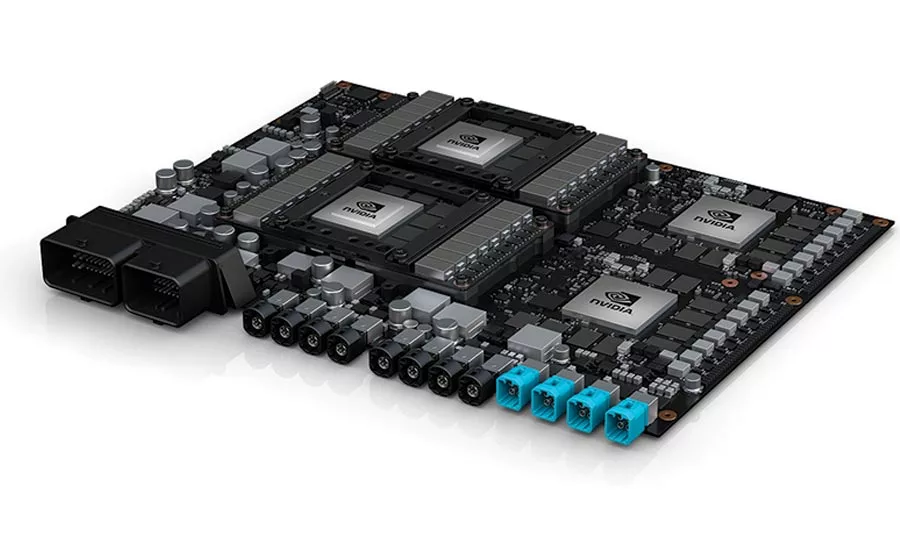

Development of the machine-learning technology will require partnerships between multiple players, explained Dr. Wolfram Burgard, Vice President of Automated Driving Technology at the Toyota Research Institute (TRI), including ones with chipmakers like Nvidia.

In March 2019, Toyota Research Institute-Advanced Development (TRI-AD) and Nvidia announced a collaboration to develop, train, and validate self-driving vehicles.

The partnership includes work to advance AI computing infrastructure using Nvidia GPUs and the development of an architecture that can be scaled across many vehicle models and types, simulating the equivalent of billions of miles of driving in challenging scenarios.

“Because of latency and the fast reaction times the vehicle will require to operate safely, our idea is that the computations will actually be in the car and reacting to sensor input,” Burgard said. “In this context, we are trying to update and improve the brain as more data and experiences arise.”

Because data models typically grow, this means both the software and hardware need to grow too, something Burgard said carmakers will have to take into consideration as they develop future vehicles, for which computer power will be scalable over time.

Toyota is planning future vehicles that will have sensors in the car that can be used for learning models and “training our brain,” as Burgard says.

“That is something we will see in the next couple of years for ADAS systems at Toyota,” he explained. “We are trying to continuously improve models, and this is where the capabilities in the car are going to depend on the power of the brain within the car.”

Going forward, he explained Toyota’s strategy is to deploy fleets of vehicles that will go out and collect data in real-world scenarios, then send that data back to a central databank where machine-learning algorithms can then add those scenarios to further extend driving and decision-making capabilities.

“Right now, many companies rely on manually labeled data, but that is a model that doesn’t scale because the cost of manually labeling this increasing amount of data would be enormous,” said Burgard. “We are looking into ways of learning that include semi-supervised methods where the algorithm can autonomously learn from data as it arrives.”

From there, TRI can develop better decision-making processes in the car and think strategically about future developments and innovative capabilities.

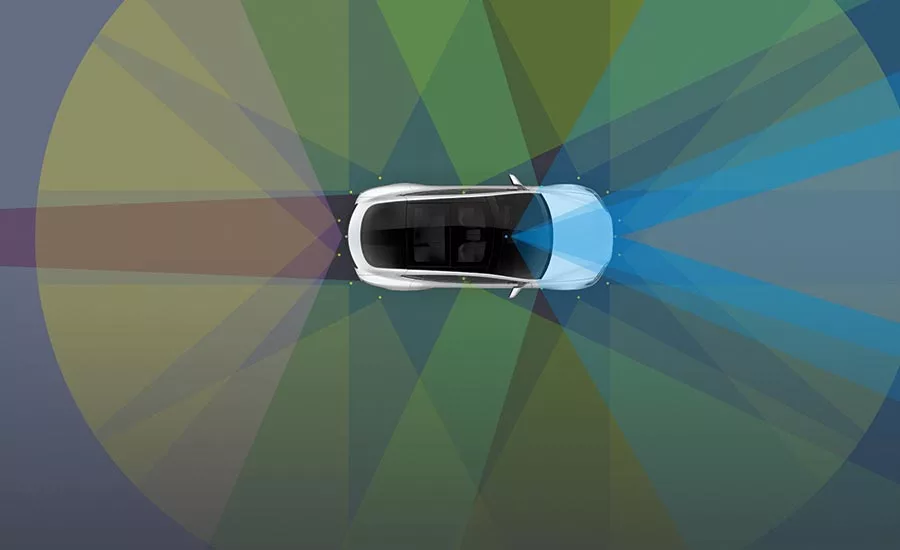

“Let’s assume you add an additional sensor in the car—a LiDAR, for example—how can we use the data to improve functionality?” he asked. “In the end, you want the vehicle to make the optimal decision, and it’s our responsibility to make sure that technology is safe. This is a big concern at Toyota.”

Roads more traveled

There’s also the questions of how to use and test machine learning and big data most effectively during the training process, something BMW is currently working on.

“In the media it is often stated that the more kilometers you drive and store, the higher the safety of the automated system,” he said. “Since we develop driver-assistance systems in house, we know that this statement is not entirely true. It should be reformulated to: The more relevant kilometers you drive and store, the higher the safety of the autonomous system.”

He explained that BMW begins quite early to define relevant, challenging scenarios the company knows would pose problems to the car’s perception and reasoning system. The amount of data based on scenario-driven data collection is smaller by a huge magnitude compared to an approach where data are gathered based on a kilometer measure alone.

“Of course, the dataset increases with increasing camera, radar, and LiDAR resolution, but since we focus on relevant scenarios only, the amount is kept within manageable limits,” he pointed out.

Furthermore, most of the data stored are generated by simulation: This means even though the amount of low-level data, like raw camera, radar, or LiDAR increases, the amount of high-level data, like extracted object or lane information, stays constant per situation.

To process the data in the car, BMW works with companies that provide high-performance electronic control units (ECUs) that can handle the increasing amount of data.

Roskopf, like others, noted the physical challenges to data processing and storage in vehicles are not so great, but rather its the expense of data storage and processing.

“We don’t store that much data in the vehicle,” he explained. “We already leverage the capabilities of our big-data platform by continuously processing the recorded data to generate reference data, often called ground truth and compute KPIs. We further leverage its compute power to simulate scenarios that we are not able to perceive in the real world.”

From this, Roskopf’s team is able to test a new software release prior to a test drive on a selected dataset that contains challenging real-world situations.

“As a result, we get test results quicker with much less driving effort,” he said.

More human than human

For all companies, like Nvidia, the goal is to create a system that would outperform a human.

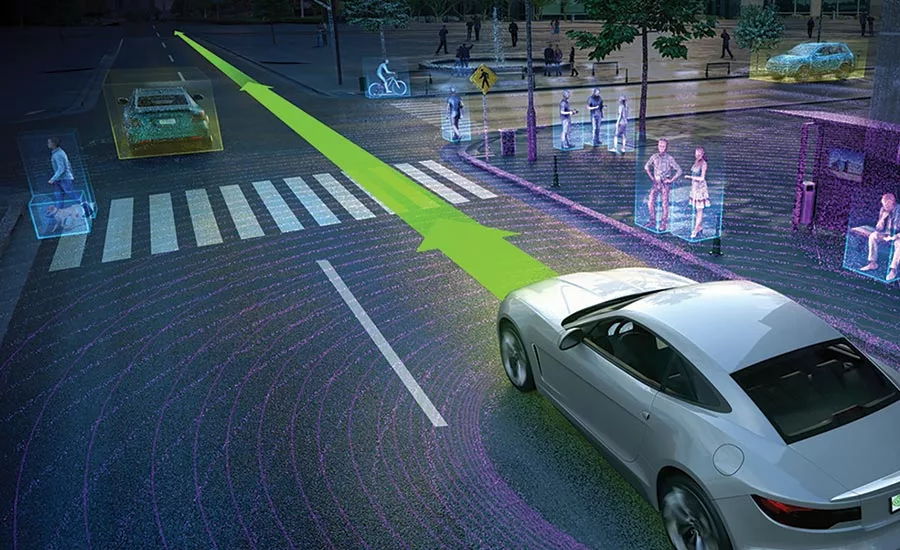

“That means taking all kinds of data and making decisions,” explained Danny Shapiro, Senior Director of Automotive for Nvidia. “In the self-driving car, we want to replace the human, who has eyes and ears, and those senses go to your brain, and your brain analyzes that information.”

In this case, the AI brain is the Nvidia Drive platform; it needs to take all of these data, which really doesn’t have much meaning—in the end it’s just a bunch of pixels and numbers—and somehow make sense of it all.

“Your brain has been trained to see a red fire truck, and based on experience you know what that is,” he said. “We need to teach the AI computer what that information means.”

That means first training it to recognize things using deep neural network (DNN) algorithms trained to mimic the synapses of the human brain that analyze groups of pixels and form edges, boundaries, shapes, and colors; these network algorithms can work together to recognize these images.

“Data is the key to writing the software, not humans sitting in front of a keyboard,” he said. “The old method of training a computer to recognize a stop sign would be someone writing a lot of code—lines for color, the octagonal shape, time of day, and weather.”

To train a machine-learning algorithm, on the other hand, what you have to do is gather up a lot of pictures of stop signs, at all different types of day, and tell the computer these are stop signs.

That training, which happens in the data center, is a very intensive process.

“In autonomous vehicles, it’s a very complex problem, because there are so many things a self-driving car can see it needs to recognize, and unpredictable behavior we need to predict,” Sharpiro explained. “It’s a combination of neural networks that improves the safety of the system, which allows for cross referencing and multiple ways of achieving the same goal.”

Building a business model too

Beyond the challenges behind the sheer number of possibilities that have to be included in the training—a mattress falling off the back of a truck, recognizing a jumping deer versus a slow-moving cow—there’s also the issue of another type of model: the business model.

“The biggest blocker I see to delivering more in-depth data insights are business models allowing data to be shared,” Richard Porter, Director of Innovation and Technology for the UK’s self-driving industry trade body Zenzic. “When we look at the tech to store, move, and process data—it’s there.”

The main issue as Porter sees it, is that, in the automotive market, companies aren’t used to sharing data among themselves, and that attitude is the biggest commercial challenge to more data sharing.

“They’re worried if they release their data they will have a competitive disadvantage,” he said. “Automakers needs to come together to try to figure out what the business model is to incentivize organizations to share data.”

Autonomous vehicle projects must first overcome issues around licensing, allowing data to be made available without having to resort to multiple contracts.

“That should unlock vast amounts of data that these algorithms need to work,” Porter said. “For me, it’s not really about the technology, but about unlocking those business models. If those exist, the other challenges will melt away quite quickly.”

Mike Ramsey, Senior Research Director, Automotive and Smart Mobility at Gartner said at first, the car itself won’t be doing much learning; that has to be done at a fleet level where huge volumes of data are transmitted back to a central data center and analyzed.

As the volume of data from multiple sensors and multiple vehicles continues to grow, Ramsey said decisions will need to be made as to how much data is going to be transferred to the cloud, analyzed, and then transmitted back to the vehicle—or fleet of vehicles, as the case may be.

“If there is some kind of event that required evasive maneuvers, that clip is going to be uploaded and brought into the fold, labeled as a new scenario—something the vehicle failed to recognize correctly,” Ramsey said. “The vehicle will be set up to say there’s an unusual event, record the reaction—deceleration, for example—and that data will be sent to the cloud, and then an improvement will be made and distributed to the fleet.”

He compared the early models to 16-year-olds who have just gotten their driver’s license and have had limited real-world driving experience.

“They’re not great drivers at first—but they learn. As autonomous vehicles log more miles, they’ll be generating a huge volume of information and uploading it to the cloud.”

Ramsey noted, however, that the big problem with machine learning and AI for training is that there is no supervision.

“There is no human monitor over the 10 million images fed into the computer to teach it something; you don’t really know how it came to that conclusion,” he pointed out. “If it fails, we don’t know how to fix it either.”

The road ahead

When you’re talking about a two-ton machine speeding down a highway at 65 miles per hour (or faster) surrounded by a flotilla of similar high-velocity machines, the car’s ability to make instant—and correct—decisions is of paramount importance.

“If Facebook suggests a face tag on somebody and it’s not correct, it’s no big deal. If Netflix recommends a movie you don’t like—thumbs down. In a car, it’s making critical decisions every fraction of a second, so we are continually fine tuning the algorithms so the accuracy is as high as it can be,” Shapiro said.

He predicted over the next couple of years that there will be all kinds of deployments, ranging from geo-fenced areas to dedicated autonomous vehicle lanes on highways, but he also acknowledged the timelines have shifted.

“The complexity and number of computing scenarions has been underestimated—even by us,” Shapiro said. “Sensors are becoming sharper—there’s more and more data constantly being collected. The vocabulary of the self-driving car will continue to expand. The software will never be done.”

Regardless of the way the algorithms work, the ways in which the data is collected, processed, stored, and shared, the watchword will always be safety. This means continuous testing of edge cases, creating ever more complex virtual worlds and massive amounts of data ingested by data centers, fed into algorithms, and layered into smarter and faster neural networks.

“Nothing is perfect in this world. We believe that autonomous vehicles will dramatically make our roads safer, so we need to bring this technology to market,” Shapiro said. “It’s hard to say they’d ever be 100 percent perfect. But the key thing here is that we are all working to make it as perfect as we possibly can. And we still have more work to do.”

Looking for a reprint of this article?

From high-res PDFs to custom plaques, order your copy today!