The Big Data Dilemma

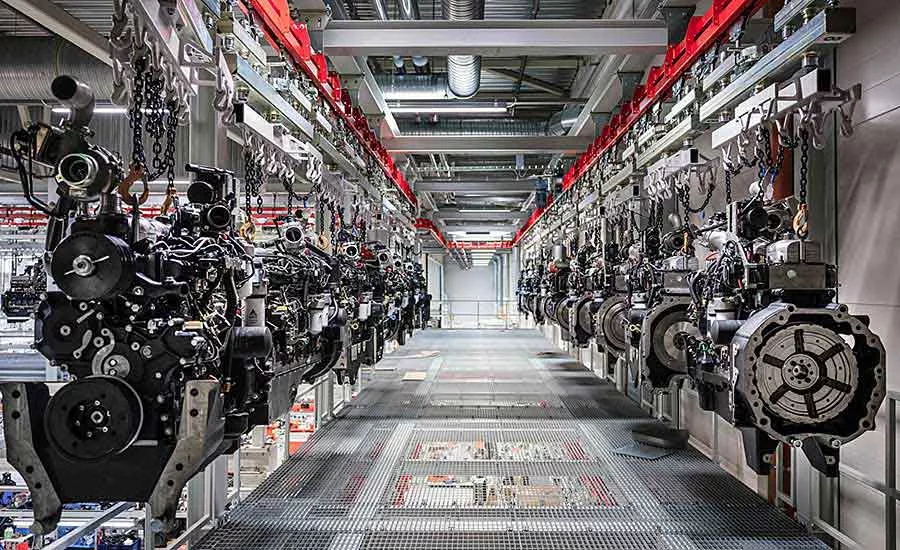

The “D” word has become one of the hottest trends in the manufacturing world. State-of-the-art sensors attached to assembly tools and production equipment are capable of collecting a constant stream of data.

The challenge for manufacturers is how to analyze that vast amount of data to gain a competitive advantage. Companies that figure out how to apply it can improve quality, increase productivity and yield, reduce costs and optimize supply chains.

Data-driven operations are critical to the future of manufacturing. However, many manufacturers are currently struggling to grasp the full value that data and analytics can unlock.

According to a recent study conducted by the Boston Consulting Group (BCG) and the World Economic Forum, nearly 75 percent of surveyed manufacturing executives consider advanced analytics to be critical for success and more important today than three years ago. However, only a few companies capture the full value that data and analytics can unlock to help address manufacturers’ most pressing challenges.

Fewer than 20 percent of surveyed participants prioritize advanced analytics to promote either short-term cost reductions or long-term structural cost improvements. Only 39 percent have managed to scale data-driven use cases beyond the production process of a single product and thus achieve a clearly positive business case.

“Manufacturing is on the verge of a data-driven revolution,” claims Daniel Küpper, managing director and partner at BCG who coauthored the report. “But, many companies have become disillusioned because they lack the technological backbone required to effectively scale data and analytics applications. Establishing these prerequisites will be critical to success in the post-pandemic world.”

Numerous Benefits

Data analytics can be used by manufacturing engineers to help answer important questions, such as:

- How is my asset performing now?

- How effective is my production process?

- How did it perform in the past?

- What contributed to its performance?

- How is performance changing and trending?

- How will it perform in the future?

- What action should I take and when?

A recent report from ABI Research claims that manufacturers will spend $20 billion to transform and support data analytics by 2026.


“Future factories are expected to have flexible and adaptable manufacturing lines that operate with greater autonomy, integrated closed-loop quality control, and connected workers to improve effective response to changes in supply and demand as they occur,” says Ryan Martin, research director at ABI Research.

“The main challenges manufacturers are facing in today’s post-COVID environment are increasing costs coupled with fluctuations in demand, constraints on availability of materials and access to a skilled workforce,” adds Simon Coombs, managing director of digital manufacturing and operations at Accenture Industry X. “These challenges make it more important than ever to improve visibility of performance metrics and increase flexibility to respond to market and supply-chain dynamics.

“Companies are moving from simply analyzing historical data to adjusting based on real-time insights and guidance,” Coombs points out. “Assembly is all about planning the work and then executing against the instructions.

“Manufacturers need real-time data analytics because it can supercharge a number of critical activities,” claims Coombs. “[Benefits include] managing the availability and sequencing of parts, effective testing, quality management, providing traceability and reducing inventory, while improving throughput and flexibility. That way, data analytics can help optimize what is already in place and bring in new insights to move toward optimized ways of working.”

Data Explosion

Data collection driven by edge computing, the Industrial Internet of Things (IIoT), sensors and other smart technologies is infiltrating every aspect of the factory floor. But, while there is value in data, not all data collected is valuable to a business.

“Much of the data collected from smart machines is so large that it is not feasible to move it from its source to the cloud, and the data that does need to be moved can take days to upload,” says Jeffrey Ricker, CEO of Hivecell, an edge-as-a-service company. “By 2025, 75 percent of data will be processed outside the traditional data center or cloud.

“Manufacturers should be looking [for] edge computing power that offers easy-to-deploy, future-proofed, technology agnostic solutions that can empower them to scale infinitely and save massive amounts of resources in managing and processing big data,” notes Ricker. “[Companies] solely relying on the cloud are missing an opportunity to analyze data on-premise and make smarter decisions.”
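As a rough illustration of analyzing data on-premise instead of shipping every raw reading to the cloud, the sketch below reduces a window of sensor readings to a compact summary record. The function name, the summary fields and the sample values are hypothetical and are not taken from Hivecell or any other product.

```python
from statistics import mean, pstdev


def summarize_window(readings):
    """Reduce a window of raw sensor readings to a compact summary.

    Hypothetical helper: only the summary, not the raw window,
    would be forwarded to a central system.
    """
    if not readings:
        raise ValueError("readings must not be empty")
    return {
        "count": len(readings),
        "min": min(readings),
        "max": max(readings),
        "mean": mean(readings),
        "stdev": pstdev(readings),
    }


# Six made-up temperature readings collapse into one five-field record.
raw = [20.0, 20.5, 21.0, 20.5, 20.0, 35.0]
summary = summarize_window(raw)
print(summary)
```

In practice, the window size and the statistics worth keeping would depend on the use case the data is meant to support.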

Some manufacturers have anxiety surrounding data analytics, due to the sheer amount of data being collected by smart machines on a minute-by-minute basis. Often, the idea of analyzing so many different data streams can sound like an overwhelming and expensive problem, especially to smaller companies.

As Industry 4.0 continues to become the standard, manufacturers that are not making the most of their data will be at a competitive disadvantage.

“The volume of data has increased in recent years, and data analytics will continue to become more important to manufacturers in the future,” says C.V. Ramachandran, operations improvement and digital transformation expert at PA Consulting. “However, only about 20 percent of manufacturers are using data analytics to improve efficiency, increase uptime and reduce downtime.

“Up to 90 percent of data generated in a manufacturing plant typically doesn’t get used to build insights that can really help the business,” claims Ramachandran. “Traditionally, there’s a lot of emphasis on historical reporting, which focuses on what happened in the past. There is much less emphasis on using data to see what will happen in the future.”

“Manufacturers typically want to see all data, but on average we see approximately 90 percent of data get wasted or unused,” adds Alok Sahu, smart factory leader at Fujitsu Ltd., a leading provider of communication and information technology. “Often, data is not contextualized properly, so when it gets stored it never ends up seeing the light of day again.”

According to Angie Sticher, chief operating officer at UrsaLeo, an enterprise software company, terabytes of captured data typically end up being wasted or go unused in manufacturing plants.

“We now live in a world where the IIoT is exploding exponentially and large amounts of data are being collected every single day,” explains Sticher. “Unfortunately, there isn’t a clear way to sort through or analyze it. This problem has made many organizations data rich and information poor.”

Underused Data

A recent report from Forrester Research Inc. claims that up to 73 percent of all data collected within an organization goes unused.

“This has become an oft-repeated statistic, highlighting a fundamental and growing challenge within IoT,” says Keith Flynn, senior director of product management at Aspen Technology Inc., a company that specializes in asset optimization software. “As the volume of connected devices continues increasing exponentially, [companies] are gathering more data than they know what to do with. However, that 73 percent statistic is a little backwards.

“The problem is not that 73 percent of data is going unused,” notes Flynn. “It’s that enterprises are collecting large volumes of data that might be of no use to begin with—a problem that is getting worse. By 2025, it’s estimated that there will be nearly 37 billion connected devices worldwide, generating over 79 zettabytes of data.”

Today, manufacturers in all industries are under tremendous pressure to assemble the highest quality products while reducing costs. To be successful, they need situational awareness and continuous intelligence to improve decision making, detect anomalies and remove waste out of the production process.

“The ever-increasing number of connected devices and sensors is creating a colossal amount of data to process, analyze and store,” says Przemek Tomczak, senior vice president of IoT at KX Systems Inc., a software company that specializes in streaming analytics. “Most legacy and current software is unable to keep up with these volumes and analytics demands.

“We are seeing advanced process control systems and historians struggle with the bottlenecks derived from this new abundance of data and demands to provide this data to engineers, data scientists and management,” explains Tomczak. “As a result, when deploying systems to detect anomalies and make predictions, organizations can face data bottlenecks, slowing response times and resulting in inefficient action on the [plant] floor.

“Harnessing data to understand and optimize machine use and maintenance can serve as a boon to [factory] workflows and output,” claims Tomczak. “Whether navigating repair schedules for a fleet of machines to ensure they are optimized for large production runs or understanding even the minor operational corrections with a machine, real-time data comprehension and analysis is key to maximizing operations.”

Mistakes to Avoid

Unfortunately, there is some confusion about what data analytics is and how it applies to manufacturing. There is also widespread fear of making investments in new technology and not realizing a return.

“There’s confusion as to how much data and analytics is enough, and how fast it really needs to be for making a meaningful impact to the business,” says Tomczak. “For example, even though real-time data and analytics continues to be an important area of focus and investment, there is a large variance around what ‘real time’ means.

“Only one-third of organizations surveyed define real time as a second or faster, and nearly half believe it means anything up to an hour or longer—even up to a few days,” Tomczak points out. “To address this confusion, it’s important to always link a data analytics initiative to business outcomes. [Engineers should] be able to explain or demonstrate how a recommendation was arrived at using the organization’s use cases and calculating return on investment results.”

“[Some manufacturing engineers] underestimate the time it takes to make sure you have good data,” adds Chris Nicholson, CEO of Pathmind Inc., a company that applies artificial intelligence technology to industrial operations. “Data is a representation of the underlying realities.

“You need to make sure that the data properly reflects those realities, that you are observing the metrics that matter and that the data is clean,” warns Nicholson. “If you don’t get that right, the best algorithms in the world will not give you insights.”

“Even though data access is the biggest challenge, the biggest mistake is to start with data collection,” says Jon Parr, senior manager for digital manufacturing and operations at Accenture Industry X. “General initiatives to ‘get connected’ and collect all possible data are a sure road to high cost and frustration.

“Companies that focus on specific use cases with clear value benefits, collecting just the right amount and quality of data that is needed, are likely to deliver the most value and to scale their [data analytics initiative] faster,” claims Parr.

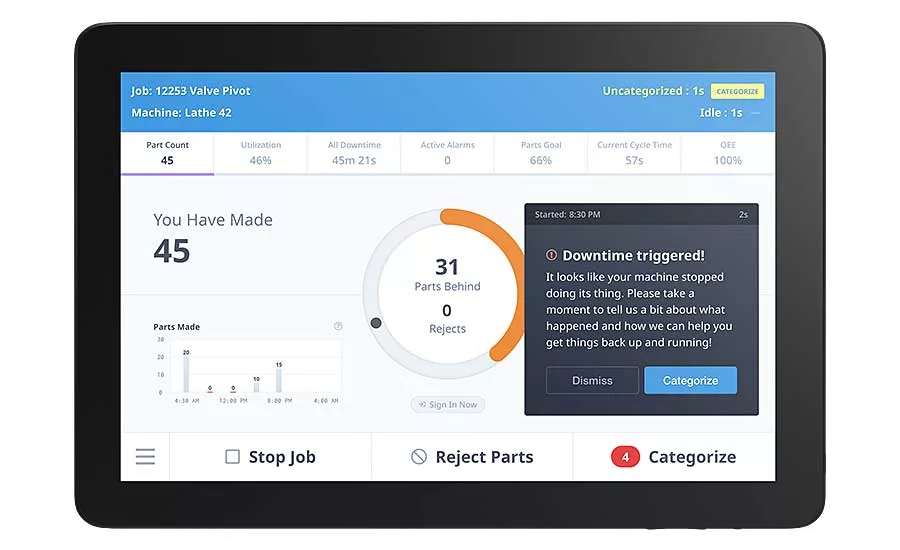

Graham Immerman, vice president of marketing at MachineMetrics, says he sees engineers make two big mistakes with data. “The first is not having a process for taking action on data,” he points out. “Using data to get a better idea of what is and isn’t working on the [plant] floor is half the battle. The next step is to realize value by making actual improvements. This is the hard part.

“The second mistake is not baselining data,” says Immerman. “Many companies are so eager to get started that they often skip this critical step. Unfortunately, without having a baseline to measure from, it becomes difficult to know how much value various efforts may create. Stepping on the scale is a critical first step to knowing how much you’ve actually improved.”

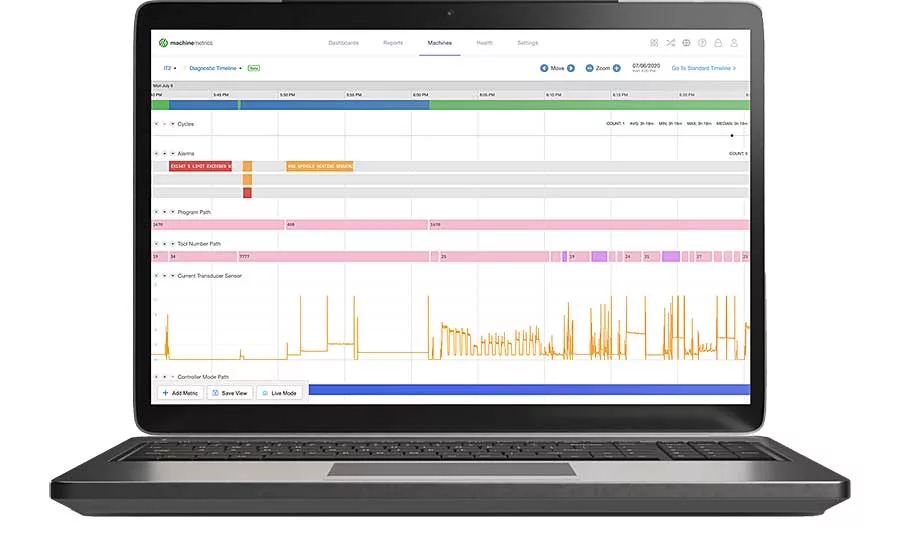
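The baselining step Immerman describes can be pictured with a minimal sketch: record a metric before an improvement effort, then compare later measurements against it. The metric (units per hour), the sample values and the function name below are hypothetical and serve only as illustration.

```python
from statistics import mean


def percent_change(baseline, current):
    """Return the percent change of current relative to baseline."""
    if baseline == 0:
        raise ValueError("baseline must be nonzero")
    return (current - baseline) / baseline * 100.0


# Hypothetical throughput samples (units per hour) for one machine.
baseline_samples = [50, 52, 48, 50]   # measured before any changes
followup_samples = [55, 56, 54, 55]   # measured after an improvement effort

baseline = mean(baseline_samples)
followup = mean(followup_samples)
gain = percent_change(baseline, followup)

print(f"Baseline: {baseline} units/h, follow-up: {followup} units/h")
print(f"Change versus baseline: {gain:.1f}%")
```

Without the first set of measurements, the follow-up figure alone would say nothing about how much the effort actually improved throughput.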

To address those issues, MachineMetrics recently launched a product called Predictive, a technology that leverages high-frequency machine data to supercharge predictive analytics capabilities without the use of external sensors. It features three basic components.

“The first is the ability to gain unprecedented fidelity with plug-and-play high frequency data collection,” explains Immerman. “When data is collected at a higher frequency, the fidelity provides unprecedented visibility into equipment problems that were essentially invisible before. This data can then be immediately used as inputs to time-series or machine learning models to predict machine failures.

“Once developed, [engineers] can then deploy and manage these algorithms to [our] edge devices that process and analyze at the source to detect potential failures in real-time,” adds Immerman. “Finally, when an algorithm is triggered, [engineers] can deliver operator actions via alerts and notifications, or automate an action on a machine that stops and adapts [it] prior to equipment failure.”
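To make the idea of detecting potential failures at the source more concrete, here is a minimal sketch of a rolling z-score check that flags readings far outside recent behavior. It is a simple statistical stand-in for the time-series or machine learning models mentioned above, not MachineMetrics’ method; the class name, window size, threshold and sample stream are all assumptions.

```python
from collections import deque
from statistics import mean, pstdev


class RollingAnomalyDetector:
    """Flag values that deviate strongly from a rolling window of history."""

    def __init__(self, window=20, threshold=3.0, min_history=5):
        self.history = deque(maxlen=window)  # most recent normal readings
        self.threshold = threshold           # z-score that triggers an alert
        self.min_history = min_history       # readings needed before checking

    def update(self, value):
        """Return True if value looks anomalous; otherwise store it."""
        is_anomaly = False
        if len(self.history) >= self.min_history:
            mu = mean(self.history)
            sigma = pstdev(self.history)
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                is_anomaly = True
        if not is_anomaly:
            # Keep anomalies out of the history so they do not skew it.
            self.history.append(value)
        return is_anomaly


# Hypothetical vibration readings with one obvious spike.
stream = [1.0, 1.1, 0.9, 1.0, 1.05, 0.95, 1.0, 5.0, 1.0]
detector = RollingAnomalyDetector(window=10, threshold=3.0)
alerts = [i for i, v in enumerate(stream) if detector.update(v)]
print("Anomalous sample indices:", alerts)
```

In a real deployment, the alert would feed an operator notification or a machine action, and the threshold would be tuned against a baseline of known-good data.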

“The fourth industrial revolution is data driven,” says Axel Schmidt, senior communications manager at ProGlove Inc., a company that specializes in wearable bar code scanners. “Data is the fuel that determines if, how and where you succeed.

“[One] question is whether you can afford to be inefficient about what you do with your data points and the analytics you want to apply,” notes Schmidt. “Chances are you will be more successful with less effort if your decisions and initiatives are based on reliable analytical data.”

According to Schmidt, some manufacturers are reluctant to invest in data analytics technology. “Many organizations may be hesitant to go forth with their initiatives, because they assume they will need the latest technology and machinery,” he points out. “In reality, there are ways to hook up legacy applications to the IoT.

“Another common misconception is to focus only on your infrastructure,” warns Schmidt. “You need to include the human worker to get the full story. In other words, you need your enterprise applications to provide a top-down view, but you also need the bottom-up perspective. It is like drilling a tunnel through a mountain. You are much faster if you start from two ends.

“Data analytics is not about implementing a [technology] and forgetting it,” claims Schmidt. “It is more about establishing a process. It is about collecting information and contextualizing it to receive actionable insights.

“It is mandatory to have the data at your disposal in real time,” says Schmidt. “But, you also need to be able to break it down and compare it to past initiatives. At the end of the day, data is what you make of it.”

New Algorithm Can Solve Big Data Problems

In today’s world of big data, learning from the vast amount of information collected every day is critical for manufacturers. Often, data collected from IIoT machines on an assembly line is sent to a remote computer in the cloud for analysis and storage. But, if the network connection fails, there can be big problems.

A new algorithm developed by engineers at the State University of New York at Buffalo solves that problem.

“Designing algorithms that can learn from data is crucial for businesses,” says Haimonti Dutta, an assistant professor of management science and systems. “Our model allows devices to communicate with one another—making them robust against network failures—while enhancing the quality of information for decision makers and doing it several orders of magnitude faster than other [alternatives].”

Dutta conducted extensive computational studies using seven publicly available, real-world data sets to validate the performance of the model. She found that the algorithm ran 1.5 times faster than similar algorithms. She also used it to predict mechanical failures at a food manufacturing plant using more than 1 million points of data.

“This case study showed that organizations can use internet-connected devices for much more than collecting data,” explains Dutta. “Our algorithm can be used in devices where speed is critical for real-time prediction and learning, like early identification of anomalies that can lead to defects, and applying strategies that allow devices to adapt and optimize themselves.”

Soon, ‘Small Data’ May Be Big News

Although ‘Big Data’ has been big news in recent years, that may be about to change. Gartner Inc. predicts that by 2025, 70 percent of organizations will shift their focus to small and wide data, providing more context for analytics and making artificial intelligence less data hungry.

“Disruptions such as the COVID-19 pandemic [are] causing historical data that reflects past conditions to quickly become obsolete, which is breaking many production AI and machine learning models,” says Jim Hare, distinguished research vice president at Gartner. “In addition, decision making by humans and AI has become more complex and demanding, and overly reliant on data-hungry deep learning approaches.”

In the future, new analytics techniques, such as “small data” and “wide data,” will become more common. “Taken together they are capable of using available data more effectively, either by reducing the required volume or by extracting more value from unstructured, diverse data sources,” explains Hare.

Small data is an approach that requires less data but still offers useful insights. It includes techniques such as certain time-series analyses, few-shot learning, synthetic data and self-supervised learning.

Wide data enables the analysis and synergy of a variety of small and large, unstructured and structured data sources. It applies X analytics, with X standing for finding links between data sources, as well as for a diversity of data formats. These formats include tabular, text, image, video, audio, voice, temperature, or even smell and vibration.

“Both approaches facilitate more robust analytics and AI, reducing an organization’s dependency on big data and enabling a richer, more complete situational awareness or 360-degree view,” says Hare. “[Both techniques can be used to] address challenges such as low availability of training data or developing more robust models by using a wider variety of data.”

According to Hare, potential applications for small and wide data include “adaptive autonomous systems, such as robots, which constantly learn by the analysis of correlations in time and space of events in different sensory channels.”

