Machine Vision: Color Vision for Inspection

Color perception is so commonplace that we are aware of it only when we see something wrong: a black pea among the green ones on a dinner plate, a wrong-colored button on a suit, or the wrong suit for the occasion. Because our attention is drawn to imperfections, even minor ones can have an immense impact on an item's value. Consider the potential liability of a fabric manufacturer that interchanges two fabrics on a sample card used by the interior decorator for a large hotel chain.

When we ask humans to verify correct assembly of an object, whether a pizza, a suit or a printed circuit board, we rely on their ability to recognize color for efficient, reliable decisions. For example, it's much easier to detect the presence of a copper ring in a color image than in a monochrome image.

The automobile industry uses color vision in many applications. One of the most common is inspecting fuse blocks to ensure correct placement of color-coded fuses. But there are many more potential applications.

Modern automobiles are essentially built to order. Successive cars on a line may have different colors, interiors, instrument panels, door panels and trim. Manufacturers rely on Tier One suppliers to deliver components to the assembly line in the exact order in which they are to be used. The consequences of not having the right component available, or of installing the wrong component, can be costly. Extensive use of bar coding throughout the process ensures that each part arrives where and when it's needed. But if the bar code contains the wrong style and color information, the consequences could be disastrous.

This was the challenge faced recently by a manufacturer of sun visors. A color-based inspection system saved the day. The company produces a variety of slightly different sun visors in the same workcell. The visors can be several different colors and are made for the left or right side of the vehicle. Some visors have vanity mirrors, and some of the mirrors may be lighted. Additionally, each visor may have a different label, depending on the destination country. The inspection system identifies all these characteristics and prints a bar code label with the correct information.

Man vs. Machine

Although inroads have been made in automating color-based assembly verification, manufacturers still rely on human inspectors, especially when subtle differences are involved. This is reasonable: if a trained human inspector with good color vision cannot see the problem, consumers are unlikely to notice it either.

Human beings are wonderfully flexible. Even without special training, we're ready to inspect an object after seeing just a couple of examples with the correct colors. A few reference samples can be stuck to the wall in case we need a refresher.

Of course, human inspectors have several drawbacks. One is that we tend to be expensive. Another is that no matter how dedicated we are, few of us can maintain the concentration necessary to perform such a boring task for extended periods. And should a family crisis or a daydream about our vacation intrude, we can become downright unreliable.


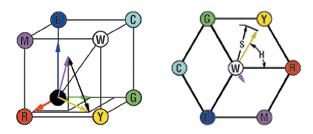

For most practical recognition purposes, machine vision systems represent colors as vectors in a three-dimensional "space." Color machine vision analysis starts with the fact that the normal human eye relies on the differential response to three pigments, each sensitive to a different portion of the electromagnetic spectrum, to provide us with the sensation of color. Therefore, any single color visible to a human can be represented by three numbers.

We engineers are comfortable with sets of three independent variables. We call them vectors, and we know how to identify them with a three-dimensional space. We have algorithms for measuring the distances and angles between vectors and for converting vectors from one coordinate space to another depending on the requirements of the problem. We also have formulas that use standard deviation and variance to represent our uncertainty about individual values, and we have correlation coefficients to measure similarity.
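To make the vector vocabulary concrete, the short Python sketch below treats two colors as 8-bit RGB triples and computes the Euclidean distance and the angle between them. The values and the helper names `distance` and `angle_deg` are illustrative assumptions, not part of any vendor's API.

```python
import math

# Colors as 3-component vectors; 8-bit RGB triples are assumed for illustration.
Color = tuple[float, float, float]

def distance(a: Color, b: Color) -> float:
    """Euclidean distance between two colors in a rectangular color space."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def angle_deg(a: Color, b: Color) -> float:
    """Angle between two color vectors in degrees; 0 means the same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    cos = max(-1.0, min(1.0, dot / (na * nb)))  # clamp against rounding error
    return math.degrees(math.acos(cos))

red = (200.0, 40.0, 40.0)
dark_red = (100.0, 20.0, 20.0)   # same surface under half the illumination
print(distance(red, dark_red))   # ≈ 103.9: far apart by distance
print(angle_deg(red, dark_red))  # ≈ 0: same direction
```

The two measures disagree: halving the illumination moves the color a long way in distance but hardly at all in angle, a hint of the lighting problems discussed below.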

We're told by textbooks and machine vision manufacturers that our familiar vector techniques can be applied to comparing colors. We know that every pixel of every image displayed on our computer screen consists of one of these vectors just waiting to be processed. So we may wonder how there can be any problem performing color-based recognition. It should be easy enough to compare two color vectors by comparing their individual components, or by measuring the distance between them in the three-dimensional space. If they are identical the distance will be zero. To allow for uncertainty in measurements, we can measure the standard deviation and set a threshold based on this standard deviation for differentiating colors. We can also reduce the uncertainty in any color measurement by taking the mean of nearby pixels representing the same color (perhaps forgetting that the objective of the process may be to determine whether these same pixels actually represent the same color).

Indeed, the traditional methods discussed above do work for individual pixels, and for groups of nearby pixels, that represent the same featureless color field under ideal, uniform lighting conditions.
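The traditional recipe described above, averaging nearby pixels and accepting a match within a few standard deviations, might be sketched as follows. The choice of k = 3 and the use of RMS distance from the mean as the "standard deviation" are assumptions made for illustration.

```python
import math
import statistics

Pixel = tuple[float, float, float]

def mean_color(pixels: list[Pixel]) -> Pixel:
    """Component-wise mean of a patch of RGB pixels (averaging reduces noise)."""
    r, g, b = (statistics.fmean(p[i] for p in pixels) for i in range(3))
    return (r, g, b)

def spread(pixels: list[Pixel], mu: Pixel) -> float:
    """RMS distance of the pixels from their mean: a 3-D 'standard deviation'."""
    return math.sqrt(statistics.fmean(math.dist(p, mu) ** 2 for p in pixels))

def same_color(reference: list[Pixel], test: list[Pixel], k: float = 3.0) -> bool:
    """Accept the test patch if its mean color lies within k standard
    deviations of the reference mean. k = 3 is an illustrative choice."""
    mu = mean_color(reference)
    sigma = spread(reference, mu)
    return math.dist(mean_color(test), mu) <= k * sigma

reference = [(200, 40, 40), (202, 38, 41), (198, 42, 39), (201, 40, 40)]
print(same_color(reference, [(199, 41, 40), (201, 39, 40)]))  # True
print(same_color(reference, [(180, 60, 40)]))                 # False
```

Note that `mean_color` silently assumes every pixel in the patch belongs to the same color, which is exactly the question the inspection is supposed to answer.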

The particular space we choose to represent a color may vary with the problem at hand. One of the most common spaces is RGB (red, green, blue). The three components of the vector may be identified with the level of excitation of each phosphor of a CRT monitor necessary to generate the desired color, or the amount of current that would be generated by a pixel in a CCD camera after incident light has passed through a filter of the corresponding color.

Of course, we don't always have ideal lighting conditions. Machine vision manufacturers have addressed this problem by changing from the rectangular RGB coordinate system to the cylindrical HSI (hue, saturation, intensity) coordinate system. In HSI coordinates, the hue of an object should stay relatively constant even when lighting intensity changes. Machine vision vendors often provide the ability to automatically convert an image from RGB to HSI coordinates.
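A common textbook form of the RGB-to-HSI conversion is sketched below for RGB values scaled to [0, 1]. Vendors may use different formulas or ranges, so treat this as an illustration of why hue survives a change in brightness, not as any particular product's conversion.

```python
import math

def rgb_to_hsi(r: float, g: float, b: float) -> tuple[float, float, float]:
    """Convert RGB in [0, 1] to (hue in degrees, saturation, intensity) using
    the common geometric HSI formulas. Hue is undefined for grays; this sketch
    returns 0.0 in that case."""
    total = r + g + b
    i = total / 3.0
    if total == 0:                 # black: nothing to measure
        return 0.0, 0.0, 0.0
    s = 1.0 - 3.0 * min(r, g, b) / total
    num = 0.5 * ((r - g) + (r - b))
    den = math.sqrt((r - g) ** 2 + (r - b) * (g - b))
    if den == 0:                   # gray: hue undefined
        return 0.0, s, i
    theta = math.degrees(math.acos(max(-1.0, min(1.0, num / den))))
    h = theta if b <= g else 360.0 - theta
    return h, s, i

print(rgb_to_hsi(0.8, 0.4, 0.2))  # orange: hue ≈ 19.1 degrees
print(rgb_to_hsi(0.4, 0.2, 0.1))  # same orange at half brightness: same hue
```

Halving every component of the orange color halves its intensity but leaves hue and saturation unchanged.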

However, there are some problems with this system, too. "Unsaturated" colors (colors in or near the range black-gray-white) do not have a well-defined hue, and colors whose hues differ by multiples of 360 degrees are identical. These problems can be reduced if the colors involved are reasonably well-saturated, if lighting can be arranged to eliminate glints and shadows in critical regions, and if the system's programmer allows for the angular ambiguity.
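Both problems can be handled explicitly. The sketch below measures hue differences the short way around the color circle and declines to trust hue for weakly saturated pixels; the 0.15 saturation cutoff is a hypothetical value, not a recommendation.

```python
def hue_difference(h1: float, h2: float) -> float:
    """Smallest angular separation between two hues, in degrees (0 to 180).
    Treats 350 and 10 degrees as 20 degrees apart, not 340."""
    d = abs(h1 - h2) % 360.0
    return min(d, 360.0 - d)

def hue_is_reliable(saturation: float, min_saturation: float = 0.15) -> bool:
    """Hue is meaningless near the black-gray-white axis. The 0.15 cutoff is
    a hypothetical value that would need tuning for a real application."""
    return saturation >= min_saturation

print(hue_difference(350, 10))   # 20.0
print(hue_is_reliable(0.05))     # False: too close to gray to trust hue
```

A naive `abs(h1 - h2)` would call two nearly identical reds 340 degrees apart.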

Technological Limitations

If it's so easy to represent and analyze colors, why have so many attempts to use machine vision for color recognition failed? And why have so many color vision systems proven so difficult to set up, calibrate and operate reliably?

The answer is that few items meet the criteria for validity of the traditional methods. Relatively few objects are a single color. And even these items, unless they are flat, textureless and viewed under completely uniform diffuse light, will not present a uniform appearance to a camera. Most images of objects provide us with a great deal of information, including color, shape, texture and surface finish. Our brains are remarkably adept at correctly differentiating apparent color changes attributable to texture, orientation and lighting angle from the intrinsic color of the object. In most cases the information available is more than enough for a human to establish the required classification.

For a single-colored object whose position is accurately known and which exhibits no particularly dark shadows or glints, traditional color vision methods that set hue thresholds in HSI space work quite well. These methods have little difficulty differentiating a red truck from a green truck under ideal (often expensive) lighting conditions. The problem is that there are very few of these simple single-color applications, compared with the number of all potential color-based inspection applications.

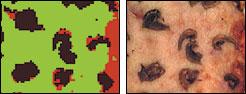

Consider a piece of fabric or an automotive fuse. Even under ideal lighting conditions, each of these objects exhibits more than one color. If the lighting is less than ideal, the range of apparent colors increases. With the introduction of topography, we may get glints and shadows. And, of course, additional apparent colors are introduced by the finite resolution of the sampling device, whether it's our eyes or a camera. This will tend to average colors at the boundaries, further distorting the statistics by introducing colors in the image that are not present in the object itself. So rather than talk about color recognition we should talk about color-based recognition.
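The boundary effect is easy to reproduce. The sketch below models finite resolution as a box filter in which each camera pixel averages a fixed number of adjacent scene points (a simplification of real optics and sensors) and shows a red-green edge producing a color that appears nowhere in the scene.

```python
def sample(scene: list[tuple[int, int, int]], pixel_width: int) -> list[tuple[float, ...]]:
    """Simulate a camera whose pixels each average `pixel_width` consecutive
    scene points (a simple box-filter model of finite resolution)."""
    out = []
    for start in range(0, len(scene) - pixel_width + 1, pixel_width):
        block = scene[start:start + pixel_width]
        out.append(tuple(sum(c[i] for c in block) / len(block) for i in range(3)))
    return out

# A one-dimensional scene: 6 red points, then 6 green points.
scene = [(255, 0, 0)] * 6 + [(0, 255, 0)] * 6
pixels = sample(scene, 4)
print(pixels)  # [(255.0, 0.0, 0.0), (127.5, 127.5, 0.0), (0.0, 255.0, 0.0)]
```

The middle pixel straddles the edge and records a dark yellow that the object does not contain.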

Variance and its square root, the standard deviation, form the basis for traditional methods of estimating errors, most likely values, and optimum correlation between a reference and a test image. These analysis methods may be appropriate for measuring single colors, but they are entirely inappropriate for analyzing items whose colors depart systematically from the mean. In other words, they are inappropriate for machine vision inspection of multicolored objects.
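A small numerical example shows how mean and variance mislead on a two-color part. Here a hypothetical reference object is half pure red and half pure blue, and the same RMS-distance spread that works for single colors is applied to it.

```python
import math
import statistics

def mean_color(pixels):
    """Component-wise mean of a list of RGB pixels."""
    return tuple(statistics.fmean(p[i] for p in pixels) for i in range(3))

def rms_spread(pixels, mu):
    """RMS distance of the pixels from their mean color."""
    return math.sqrt(statistics.fmean(math.dist(p, mu) ** 2 for p in pixels))

red, blue, purple = (255, 0, 0), (0, 0, 255), (127.5, 0, 127.5)
reference = [red] * 50 + [blue] * 50           # a two-color object
mu = mean_color(reference)
sigma = rms_spread(reference, mu)

print(mu)                                      # (127.5, 0.0, 127.5)
print(math.dist(purple, mu))                   # 0.0
print(3 * sigma)                               # ≈ 540.9
print(math.dist((0, 0, 0), (255, 255, 255)))   # ≈ 441.7, largest RGB distance
```

The mean is a purple that no pixel of the object contains, a uniformly purple part matches it perfectly, and the three-sigma acceptance radius is larger than the whole RGB cube, so every possible color would pass.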

Simple thresholding techniques won't work well either. Thresholding relies on specifying boundaries in color space to separate one object from another. Obviously, any machine vision system that relies on this approach for general inspection limits its own use to a small subset of available problems where there is little, if any, color overlap. Many manufacturing engineers have learned to their sorrow that as the number of colors in an object increases, programming threshold-based systems can rapidly become very tedious.
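The sketch below shows threshold boxes in RGB space, with hypothetical limits for three fuse colors. Each box costs six hand-set numbers, a reddish-orange pixel lands in two boxes at once, and an orange pixel in shadow falls into the brown box.

```python
# Hypothetical axis-aligned RGB threshold boxes: (low, high) per channel.
BOXES = {
    "red":    ((150, 255), (0, 80), (0, 80)),
    "orange": ((180, 255), (60, 160), (0, 60)),
    "brown":  ((80, 170), (30, 100), (0, 60)),
}

def matches(pixel, boxes=BOXES):
    """Return every label whose box contains the pixel (ideally exactly one)."""
    return [name for name, limits in boxes.items()
            if all(lo <= c <= hi for c, (lo, hi) in zip(pixel, limits))]

def parameter_count(boxes=BOXES):
    """Each box needs a low and a high limit on each of three channels."""
    return 6 * len(boxes)

print(matches((230, 120, 20)))  # ['orange']: the easy case
print(matches((200, 70, 30)))   # ['red', 'orange']: ambiguous overlap
print(matches((120, 60, 20)))   # ['brown']: shadowed orange misread
print(parameter_count())        # 18 numbers to tune for just three colors
```

Adding a color means adding and tuning six more limits, and checking the new box against every existing one for overlap.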

New Methods

A new color analysis method based on information theory promises to overcome these limitations. Called minimum description analysis, the method was first developed in the late 1970s.

Inspection systems using minimum description analysis have several advantages. Because they require a large number of variables to handle complex color distributions, they must be trained by example, which virtually eliminates the tedious threshold-setting required with traditional systems. More than a decade of experience with these systems has shown that their decisions usually correspond closely to those of a human trained on the same data set. This should not be surprising: like human pattern recognition, the method uses no explicit thresholds and no color-space coordinate transformations, and it is consistent with efficient information transfer.
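As a rough illustration of the information-theoretic principle, and not of any commercial implementation, the sketch below learns a color-probability model from training examples of each class, then assigns a test patch to the class whose model encodes its colors in the fewest bits. The quantization level, the add-one smoothing, the class names and all function names are assumptions.

```python
import math
from collections import Counter

LEVELS = 4  # hypothetical: 4 bins per 8-bit channel, 64 coarse colors in all

def quantize(pixel: tuple[int, int, int]) -> int:
    """Map an 8-bit RGB pixel to a coarse color-bin index in 0..LEVELS**3 - 1."""
    r, g, b = (c * LEVELS // 256 for c in pixel)
    return (r * LEVELS + g) * LEVELS + b

def train(examples: list[tuple[int, int, int]]) -> dict[int, float]:
    """Estimate color-bin probabilities from example pixels. Add-one smoothing
    keeps colors never seen in training from costing infinitely many bits."""
    counts = Counter(quantize(p) for p in examples)
    total = len(examples) + LEVELS ** 3
    return {k: (counts[k] + 1) / total for k in range(LEVELS ** 3)}

def code_length_bits(model: dict[int, float], pixels) -> float:
    """Bits needed to encode the pixels' color bins with the model's ideal code."""
    return -sum(math.log2(model[quantize(p)]) for p in pixels)

def classify(models: dict[str, dict[int, float]], pixels) -> str:
    """Choose the class whose model gives the shortest description."""
    return min(models, key=lambda name: code_length_bits(models[name], pixels))

# Hypothetical multicolored parts: a red body or a blue body, each with silver.
silver = (190, 190, 190)
models = {
    "red_part": train([(220, 30, 30)] * 30 + [silver] * 20),
    "blue_part": train([(30, 30, 220)] * 30 + [silver] * 20),
}
test_patch = [(210, 40, 35)] * 25 + [(185, 185, 185)] * 15
print(classify(models, test_patch))  # red_part
```

The decision compares description lengths between trained classes rather than testing pixels against hand-set color limits, and the two-color parts need no special handling.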

The first color machine vision system based on minimum description was installed about 10 years ago. Today, these systems are being used in challenging applications in a variety of industries. In the automotive industry, these systems are often used to inspect interior components and fuse blocks.

For more information about color machine vision for assembly inspection, call WAY-2C at 781-641-0605 or visit www.way2c.com.
